Stable Diffusion is a deep-learning text-to-image model. Released by Stability AI in 2022, it can generate detailed images from text descriptions. The model is free and easy to build upon, and it has drawn attention for how it handles artists’ influence. It is reportedly being tested by Google, the New York Times, and NBC.

Text-to-image model

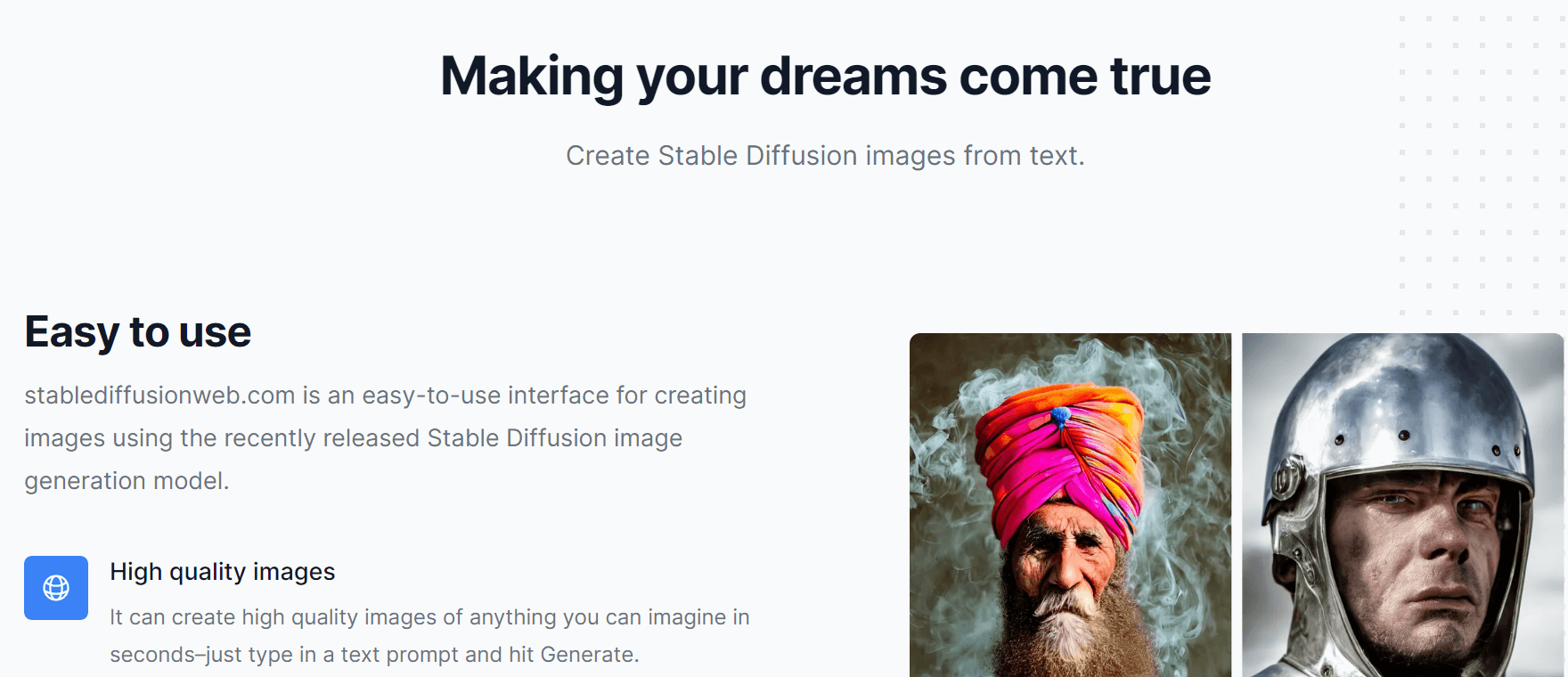

The Stable Diffusion text-to-image model is a powerful tool for producing images from text. Its algorithm is trained on a database of image-text pairs, which is how it learns to match pictures to descriptions. It can also transfer a style from one image to another. While it may not be the best model for every image generation task, it is a highly effective tool for a number of applications.

Critics have raised a number of concerns about Stable Diffusion. For one thing, copyrighted work was not filtered out of its training data, which critics argue raises both legal and ethical problems. The model also became the subject of controversy when an early viral tweet catalogued the living artists whose styles it was able to imitate.

The Stable Diffusion text-to-image model is an important breakthrough in computer graphics. It can produce photorealistic 512×512 images and can be executed on consumer GPUs. In addition, it is fast and accurate, and can produce images without pre-processing.

Although Stable Diffusion is a leading text-to-image model, it is free to use and open source. The program was released to the public on August 22nd, 2022. Since then, its influence has been growing quietly. While its emergence has been welcomed by the AI art community, it has also been met with criticism from traditional artists.

The Stable Diffusion text-to-image model is a highly versatile algorithm that produces images matching a text prompt. It is based on deep learning and generative AI, the same family of techniques used to synthesize images, voices, and facial expressions. Its results are not unbiased, however: they reflect the data the model was trained on.

Free to use

Stable Diffusion is an AI-based text-to-image model developed by Stability AI and Runway with Robin Rombach of LMU Munich. It enables users to create art from text with the click of a button. The model is based on the latent diffusion model. It was inspired by Katherine Crowson’s research, as well as OpenAI’s DALL-E 2 and Google Brain’s Imagen. It is free to use and open source, which means it can be used by anyone. The model was released publicly on August 22nd, 2022 and has since been widely embraced by the AI art community. While some traditional artists have condemned it as a threat to their livelihood, many others have extolled its capabilities.

This application uses a latent text-to-image diffusion model that is capable of generating photorealistic images from text. It cultivates autonomy and produces extraordinary imagery by empowering billions of people to create their own work. This is a revolutionary method of text-to-image modeling, and it can help you create amazing images. By using Stable Diffusion, you will have the power to create your own masterpieces.

Stable Diffusion is also available as a free, open source desktop application. It runs on M1 or M2-based Macs with macOS 15.2 or newer and has an easy-to-use interface. You can download it from GitHub and follow the instructions to install it on your computer. Once installed, the software requires an internet connection.

Stable Diffusion is released under an open source license, and users are encouraged to modify it to their own liking. For instance, you can integrate Stable Diffusion with your existing creative tools. You can also share the image prompts you find most useful with other users.

Easy to build on

The Stable Diffusion generative model has a number of adjustable options that trade output quality against generation time. Users can modify the height and width of output images and the number of denoising steps used to generate a result. These parameters can be set as high or as low as you wish, but higher values mean a longer production time.
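To make the trade-off concrete, here is a minimal sketch of how these three options might be validated and compared. The `GenerationSettings` class and its `relative_cost` method are hypothetical names invented for illustration, not part of any Stable Diffusion release. The defaults (512×512 at 50 steps), the rule that image sides must be multiples of 8, and the idea that work grows with pixels times steps are all assumptions, and the last one is only a rough approximation.

```python
from dataclasses import dataclass

# Assumption: image sides must be multiples of 8, because the model's
# autoencoder is taken to downsample each side by a factor of 8.
SIDE_MULTIPLE = 8


@dataclass(frozen=True)
class GenerationSettings:
    """Hypothetical container for the options described in the text."""

    height: int = 512  # assumed default
    width: int = 512   # assumed default
    steps: int = 50    # assumed default number of denoising steps

    def __post_init__(self):
        for name in ("height", "width"):
            side = getattr(self, name)
            if side <= 0 or side % SIDE_MULTIPLE != 0:
                raise ValueError(
                    f"{name} must be a positive multiple of "
                    f"{SIDE_MULTIPLE}, got {side}"
                )
        if self.steps < 1:
            raise ValueError("steps must be at least 1")

    def relative_cost(self, baseline=None):
        """Rough work estimate relative to a baseline: pixels times steps.

        This is a simplification; real generation time also depends on
        the hardware and on how the model scales with resolution.
        """
        if baseline is None:
            baseline = GenerationSettings()
        own = self.height * self.width * self.steps
        ref = baseline.height * baseline.width * baseline.steps
        return own / ref


if __name__ == "__main__":
    bigger = GenerationSettings(height=768, width=768)
    print(bigger.relative_cost())  # 2.25 under the pixels-times-steps assumption
```

Under this estimate, doubling the step count doubles the work, while moving from 512×512 to 768×768 costs a little more than twice as much.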

Stable Diffusion is free to use and open source. It only went public on August 22nd, 2022, but its influence has been quietly spreading. It has been both praised and derided by traditional artists, and it is already starting to change the way people create art. Here are some examples of how Stable Diffusion can be useful.

If you’re interested in building on Stable Diffusion, you can install it on a macOS device with an M1 or M2 processor. While the packaged app is not as performant as a command-line installation, it is free and easy to set up. It’s distributed as a DMG file and installs like any other application from outside the App Store.

A Stable Diffusion image generation model can run on consumer-grade GPUs and is known for its speed. The model requires at least 10 GB of VRAM to run. It can produce 512×512-pixel images in a few seconds. With this ability, Stable Diffusion has revolutionized the field of image generation. It democratizes image generation by allowing the general public to use a high-performance model without spending a fortune.
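One reason a latent diffusion model can fit on consumer hardware is that it denoises a compressed representation of the image rather than the full-resolution pixels. The arithmetic below is a sketch under two assumptions that the text above does not state: that the autoencoder shrinks each image side by a factor of 8, and that the latent has 4 channels. The function names are hypothetical.

```python
# Assumptions: downsampling factor of 8 per side and 4 latent channels.
DOWNSAMPLE = 8
LATENT_CHANNELS = 4
RGB_CHANNELS = 3


def latent_shape(height, width):
    """Return (channels, height, width) of the assumed latent tensor."""
    if height % DOWNSAMPLE or width % DOWNSAMPLE:
        raise ValueError("height and width must be multiples of 8")
    return (LATENT_CHANNELS, height // DOWNSAMPLE, width // DOWNSAMPLE)


def compression_ratio(height, width):
    """How many pixel values each latent value stands in for."""
    channels, lat_h, lat_w = latent_shape(height, width)
    pixel_values = RGB_CHANNELS * height * width
    latent_values = channels * lat_h * lat_w
    return pixel_values / latent_values


if __name__ == "__main__":
    print(latent_shape(512, 512))       # (4, 64, 64)
    print(compression_ratio(512, 512))  # 48.0
```

With these assumed numbers, a 512×512 RGB image holds 786,432 values while its latent holds 16,384, so each denoising step touches roughly 48 times fewer values than it would in pixel space.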

To train an image generation model like Stable Diffusion, you need a large data set of images with captions and metadata. Stability AI’s Stable Diffusion model was trained on a subset of the LAION-5B image set, one of the largest publicly available image-text datasets. This means that the model has incorporated the styles of many living artists.

The Stable Diffusion method can scale easily compared to previous methods. However, there are a few limitations of Stable Diffusion, including the fact that it is not as flexible as other image generation frameworks. In addition, Stable Diffusion has built-in biases that make it less effective for some tasks.

Filters artists’ influence

Stable Diffusion does not need specialized hardware to generate images in the style of a particular artist. The software runs well on most midrange gaming PCs and high-end laptops. The minimum RAM is 16GB, and more RAM is better.

The Stable Diffusion model ingests massive amounts of image data, including incidental background detail and the general physical characteristics of celebrities. This allows it to render recognizable people within a context of the user’s choice. It means that the likenesses of musicians, actors, and artists, and not just their styles, can be reproduced by this new machine learning model.

The new technology has the potential to make image synthesis widely accessible and could change how visual artists are perceived. While this is an extremely exciting development, there are also risks involved. Stable Diffusion can create images of celebrities, politicians, and other real people. For instance, the software could produce images of Barack Obama, Boris Johnson, and even Hitler.

Stable Diffusion has already garnered criticism from artists and critics on Twitter. Its ability to mimic the style of living artists has triggered concerns about copyright and ethics. While the Stable Diffusion team did not advertise this feature, an early viral tweet catalogued the living artists it had successfully imitated. The training data includes millions of images by living artists, collected without consulting them, which raises questions about authorship and copyright.

In addition to turning a rough drawing into polished-looking art, Stable Diffusion can also be used to fill in blank parts of a picture. In both cases the source image serves as a second prompt alongside the text. While the denoising process is sequential and therefore relatively slow, it can produce state-of-the-art results.
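The fill-in idea can be pictured as a mask that says which pixels to keep from the source and which to take from the model. The sketch below is a toy on small grayscale grids, not Stable Diffusion's actual pipeline: `composite` is a hypothetical helper, the "generated" grid is hard-coded rather than produced by a model, and real implementations work on much larger tensors and differ in where they apply the mask.

```python
def composite(source, generated, mask):
    """Combine two equally sized grids of pixel values.

    Where mask is 0 the source pixel is kept; where mask is 1 the
    generated pixel fills the blank.
    """
    if not (len(source) == len(generated) == len(mask)):
        raise ValueError("all grids must have the same number of rows")
    result = []
    for src_row, gen_row, mask_row in zip(source, generated, mask):
        if not (len(src_row) == len(gen_row) == len(mask_row)):
            raise ValueError("all rows must have the same length")
        result.append(
            [gen if keep_generated else src
             for src, gen, keep_generated in zip(src_row, gen_row, mask_row)]
        )
    return result


if __name__ == "__main__":
    source = [[10, 20], [30, 40]]     # original picture
    generated = [[99, 99], [99, 99]]  # stand-in for model output
    mask = [[0, 1], [1, 0]]           # 1 marks the blank parts to fill
    print(composite(source, generated, mask))  # [[10, 99], [99, 40]]
```

Because the untouched pixels come straight from the source, the filled region has to blend with its surroundings, which is why the source image acts like a second prompt.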

The algorithm relies on a large database of images and text descriptions. Stable Diffusion was trained on a subset of LAION-5B, a dataset of more than five billion image-text pairs. To generate an image, it starts with random noise and gradually edits it until it looks like the target. Because the starting noise differs from run to run, the system can generate multiple results when a user submits the same prompt.
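The idea of starting from noise and refining it step by step can be illustrated with a toy example. This is not the real algorithm: there is no neural network, the "image" is a single 2D point, and the hand-written update simply pulls the point toward the nearest of three fixed targets. `MODES`, `nearest_mode`, and `toy_denoise` are hypothetical names, and the targets stand in for "images that match the prompt".

```python
import random

# Hypothetical stand-ins for different images that all match one prompt.
MODES = [(-2.0, -2.0), (2.0, 2.0), (2.0, -2.0)]


def nearest_mode(point):
    """Return the target closest to the current point."""
    return min(
        MODES,
        key=lambda m: (m[0] - point[0]) ** 2 + (m[1] - point[1]) ** 2,
    )


def toy_denoise(seed, steps=30, rate=0.2):
    """Start from seeded random noise and refine it a little each step."""
    rng = random.Random(seed)
    point = (rng.gauss(0.0, 3.0), rng.gauss(0.0, 3.0))  # pure noise
    for _ in range(steps):
        target = nearest_mode(point)
        # Move a fraction of the way toward the target, as a stand-in
        # for one denoising step.
        point = tuple(p + rate * (t - p) for p, t in zip(point, target))
    return point


if __name__ == "__main__":
    for seed in range(5):
        x, y = toy_denoise(seed)
        print(seed, round(x, 2), round(y, 2))
```

The same seed always reproduces the same result, while different seeds can settle on different targets, which mirrors how one prompt can yield many different images.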

Conclusion

If you are still not sure, you can try Stable Diffusion for free before deciding whether it fits your workflow. I hope you found this review useful.